If you follow the world of Artificial Intelligence, you have probably heard of the famous 2017 paper by Google researchers called "Attention Is All You Need". It was a turning point in the development of generative AI: it introduced the "Transformer" architecture, which underpins ChatGPT and much of the modern generative AI revolution.

But as I read about how these machines "think," I realised something profound and, at the same time, very relatable. To get smarter and faster, AI needed to stop trying to process language the way schools teach students to read, word by word and line by line, and start processing information the way I do.

Let's look at the before-and-after impact of this paper through the lens of my own dyslexic brain.

The Old Way: The Struggle of Sequence

Before the "Attention" paper, AI models (specifically Recurrent Neural Networks, or RNNs) read sentences sequentially. To understand word #10, they had to hold the memory of word #1, word #2, and so on. By the time they got to the end of a long paragraph, they often "forgot" the context of the beginning. It was computationally expensive, slow, and prone to error.

This is precisely what reading feels like for me.

When I try to read phonetically, decoding letters in a strict line, my working memory gets overloaded 🤯. By the time I decode the end of the sentence, I have lost the thread of the beginning. As researcher Dr Sally Shaywitz notes, dyslexia is fundamentally a difficulty in retrieving these phonological codes quickly (Shaywitz, 2003).

Before the paper, the “old way” of interpreting language by computers had a bottleneck. Through countless false starts and well-meaning but often painful feedback, I've realised that my brain has the same bottleneck.

The New Way: The Power of "Self-Attention"

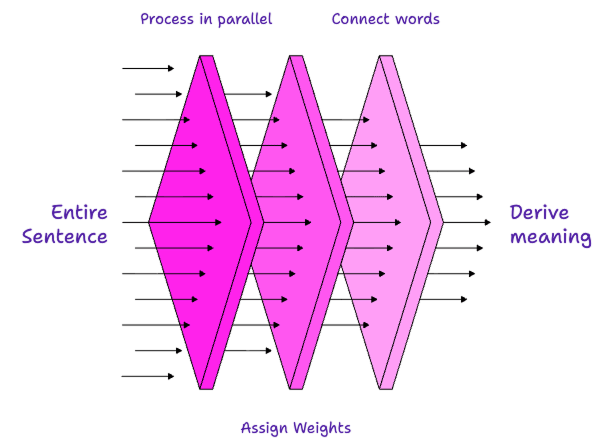

The breakthrough in "Attention Is All You Need" (Vaswani et al., 2017) was that the researchers stopped forcing the computer to read left-to-right. Instead, they created a mechanism called Self-Attention.

The model looks at the entire sentence at once (parallel processing).

It assigns a "weight" (importance) to specific words.

It connects words based on their relationship and context, regardless of how far apart they are in the sentence.

"Self-attention, sometimes called intra-attention, is an attention mechanism relating different positions of a single sequence to compute a representation of the sequence."

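To make those three steps concrete, here is a minimal sketch in Python. It is my own simplification, not the paper's implementation: the word vectors are made-up numbers, the function names (`softmax`, `dot`, `self_attention`) are hypothetical, and each word vector stands in as its own query, key and value, whereas the real Transformer learns separate projections for each.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product: larger when two vectors point the same way."""
    return sum(x * y for x, y in zip(a, b))


def self_attention(vectors):
    """Scaled dot-product self-attention without learned projections.

    Assumption for illustration: every word vector is used directly
    as its own query, key and value.
    """
    scale = math.sqrt(len(vectors[0]))
    outputs, all_weights = [], []
    for query in vectors:
        # Compare this word with every word in the sentence, near or far.
        scores = [dot(query, key) / scale for key in vectors]
        # Convert the comparisons into importance weights.
        weights = softmax(scores)
        # Blend all word vectors according to those weights.
        mixed = [
            sum(w * v[i] for w, v in zip(weights, vectors))
            for i in range(len(query))
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical two-dimensional "meanings" for a three-word sentence.
words = ["the", "cat", "sat"]
vectors = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.9]]

outputs, weights = self_attention(vectors)
for word, row in zip(words, weights):
    print(word, [round(w, 2) for w in row])
```

With these toy numbers, "cat" puts more weight on "sat" than on "the", because their vectors point in similar directions. Each word's row is also worked out independently of the others, which is why the whole sentence can be processed in parallel rather than one word at a time.
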
My "Transformer" Brain

This nonlinear approach to understanding language is how I survive and thrive in the world. I don't read linearly. Instead, my transformer brain is using my own biological self-attention process.

When I look at a page, I don't see a string of letters. I see a cloud of information. This is probably why one of my pet hates is the interchangeable use of "data" and "information" as if they were the same thing.

“The distinction between data and information is subtle but it is also the root of some of the more difficult problems in security. Data represents information. Information is the (subjective) interpretation of data.” (Gollmann, 2011)

My eyes scan the page, picking out the "high-weight" words (keywords, concepts, distinct shapes) and ignoring the "low-weight" words (articles, conjunctions). If I try to read each word, it overloads me. Instead, my brain builds a holistic picture of the meaning without decoding every syllable.

Dyslexic Language Processing

It’s Not a Bug, It’s an Architecture

For years, I felt like a broken computer. But the success of Transformer models shows that sequential processing isn't the only way to derive context and meaning from language.

In fact, for complex tasks, parallel processing (taking it all in at once) can often produce broader results and surface connections between topics and themes more quickly. This can be great for creativity, though it can also be a challenge when the task calls for minute detail.

Drs. Brock and Fernette Eide, authors of The Dyslexic Advantage, argue that the dyslexic brain is wired for this exact kind of "big picture" thinking. They describe "Interconnected Reasoning" as a primary strength of the dyslexic mind: the ability to see connections between distant concepts that others miss (Eide and Eide, 2011).

This distinction perfectly mirrors the shift in AI architecture:

Sequential Thinkers process data like a traditional computer: precise, linear, step-by-step.

Dyslexic Thinkers process data like a Transformer: contextual, pattern-based, and holistic.

Conclusion

We spend a lot of time teaching children to be better "sequential processors." While reading skills are essential, we should also recognise that their hardware might be optimised for a different form of processing and seek techniques to help them process language more efficiently.

For me, "Attention Is All You Need" wasn't just one of the most cited research papers on artificial intelligence. It shed light on how I navigate a complex world and gave me the confidence to realise that while I might not decode every letter, I can interpret context and meaning in my own way. I don't need individual letters; I just need an understanding of the bigger patterns and the themes to derive context and meaning.

References

Eide, B. and Eide, F. (2011) The Dyslexic Advantage: Unlocking the Hidden Potential of the Dyslexic Brain. New York: Hudson Street Press.

Gollmann, D. (2011) Computer Security. Hoboken: John Wiley & Sons.

Shaywitz, S. (2003) Overcoming Dyslexia: A New and Complete Science-Based Program for Reading Problems at Any Level. New York: Alfred A. Knopf.

Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A.N., Kaiser, Ł. and Polosukhin, I. (2017) 'Attention Is All You Need', Advances in Neural Information Processing Systems, 30. Available at: https://arxiv.org/abs/1706.03762 (Accessed: 30 November 2024).